I started my career coding HTML 4.0 way back in 2000. The web was hot at that time (btw, it has become hotter now :)). HTML seemed easy: you write tags and see the changes. No wait time, no fun ;).

Java was the hot buzzword. Every other person was crazy about learning Java. Applets were kind of a geek thing, and learning Java was a matter of prestige. Your fellow buddies silently respected you when you showed off your new Applet or Servlet.

During my early months on the job, I started feeling that HTML was only for greenhorns; there wasn't much fun in doing HTML or, for that matter, JavaScript. One reason was that the tools used for (D)HTML were spitting too much of

I slowly forgot HTML.

But now, with the killer features of HTML 5.0, nobody can ignore it. It has the capacity to completely replace Rich Internet App platforms such as Flash and Silverlight.

It would soon become a standard and entirely change how we write web applications.

JavaScript would be the new language of the Internet.

Some of the features are just amazing:

1. Offline storage database

2. Drag and Drop

3. Canvas

4. Rich Media tags

& the list goes on..

Check this out:

The demo of HTML5 is itself in HTML5. How cool is that!

And this video on YouTube:

May 9, 2010

Java EE6 CDI

It's making life harder for Java EE developers and architects. Now they have to un-learn and learn new stuff.

Jokes apart, Java EE 6 with CDI indeed seems to simplify things for the programmer: less code, more work done, backed by Oracle (i.e. Sun), with Tomcat support coming soon. Finally, Spring taught Java a lesson, and Java has learnt it well.

Let me head to the book shop to pick up the book - "Java Development without Spring" By Johan :)

I don't know what my next client would want. Spring or No Spring. Do I have a choice?

May 7, 2010

Bob Martin on Software Craftsmanship

After listening to this marvelous session, my takeaway is this: anybody in software development who writes production code but does not write a unit test (i.e. an automated test) for that production code is not a software "professional" --> period.

Everything falls into place, like good design and good architecture, when we have a test suite. It adds immense confidence to keep improving the software without any "fear" of breaking it. Good test suite == good software.

Craftsmanship is nothing but the professional commitment of an individual to deliver the "best" software to their client or employer.

No finger-pointing, no blame game, no wasting time on how this mess got created: just get your hands dirty and start fixing.

Thanks Bob for the lesson.

April 24, 2010

Advice to myself - Choosing Work

These are not in any particular order:

1. Does it give me money

2. Does it give me fame

3. Does it give joy

Of course, the last one is the most important. Often many try to buy the last one with the first one, which is mostly superficial.

If all three come your way, then there is nothing like it...

March 29, 2010

Reading Code...Software Archeology - 2

This is true for many of us. In college we spend most of our time writing code, but the moment we are out in the industry, we join a project team and are put in a situation where we spend the majority of our time reading (others') code.

This is almost always true, at least in the Indian market, where engineers work on outsourced projects.

A few other reasons:

1. Most companies have a big agenda of "re-use". They always emphasize and encourage teams to create a library of re-usable components/assets. Often they track this with metrics on how much your team has shared and how much cost you have saved by using already available components.

2. There are umpteen open source libraries. Most of them lack documentation. The only way to make them work is to open the source and digest it.

3. 80% of the projects that get outsourced are maintenance and enhancement projects, which lack up-to-date documentation.

4. Legacy projects

etc

Look at it this way: we spend about 60-80% of our time in the industry reading code, 15-35% writing code, and the remainder on other activities. Yet almost all the tools, processes, standards, and best practices available on the web are for writing and creating new systems; very few help with reading and understanding existing code.

What is Software Archeology?

I will leave the veterans and experts of the industry to shed more light on this:

Digging code: Software archaeology - Good overview

Grady Booch on Software Archeology

Listen to Dave Thomas on SE Radio

Article on Java Dev Journal

In my next post, I will share my experiences of diagnosing and understanding a complex Java EE app using some of the tools that I stumbled upon on the web.

March 16, 2010

Reading Code...Software Archeology - 1

This project had some history (of course, not a good one) of being slow, complex, undocumented, full of open bugs etc. As luck would have it, I landed in this one. But there is always some scope to learn, be it a new project or an old one.

Background:

This project was an IBM WAS portal application (one big portlet) built using the legacy Struts 1.1 framework and accessing a set of remotely deployed EJB services. The EJB services project (configured with 6 datasources) acted as the service provider and the portal project as the consumer of the services.

Size-wise, the portal app has 6 very big Struts 1.1 action classes (where all the UI logic resides), 11 packages, and about 20 KLOC. When I say very big, I mean very big: about 5 KLOC in each action class.

The goals of this project were to de-portalize, i.e. move away from the portal runtime to save some money on portal licenses, and to merge the complex EJB services project into this one. The merge is primarily to strip off the EJB layer so that performance improves and all services are local. Everything is in one place, and so it is more maintainable.

btw, earlier I wanted to use the word "re-architecting", but found out that there is no existing architecture in the current project to re-architect. So I used another piece of jargon instead: de-portalizing.. :)

But what has all this got to do with the topic of this blog, Reading Code...Software Archeology?

You might have guessed from the above background where I'm heading..

October 29, 2009

Back…

Downloaded Windows Live Writer..Publishing a sample blog…Hope this will motivate me to blog consistently.

Finally some relief and a sense of satisfaction. The Txn Engine app saw the light of day: it got deployed to production on Amazon EC2 yesterday.

Three months of intense code churning activity has come to an end. No more stand-up meetings :)

Txn Engine is a batch processing application built on Spring Batch. It is deployed on an Amazon EC2 large instance.

The Spring Batch folks have done a very good job (and are still at it). The documentation on their site is good, the sample apps cover most of the needed business and enterprise batch use cases, and the forum is active, so you get quick clarifications and support. Waiting for the release of the web framework to monitor the batch metadata tables.

June 3, 2009

March 18, 2009

Tracking Requirements using Annotations for Java Projects

Tracking requirements has always been a major challenge in big projects. The Requirement Traceability Matrix (RTM) document is never up-to-date. The team loses interest and lacks the rigor to maintain the RTM for various reasons.

In this article we will see a developer-friendly way of tracking requirements directly in the Java source code with annotations, a feature introduced in JDK 5.0.

Introduction

Annotations (or metadata) were formally introduced as part of JSR-175, approved in Sept 2004, in Java 5.0 (JDK 1.5).

Annotation-like constructs were present in earlier versions of the JDK, but in ad-hoc forms such as the @deprecated javadoc tag and the transient modifier (indicating that a field should be ignored by the serialization subsystem). JSR-175 standardized the mechanism, and JDK 5.0 gave developers the power and flexibility to define custom annotations.

What are annotations?

In simple language, annotations are “metadata” added directly to the source code.

This metadata can be retained in the class files generated by the Java compiler and can be read reflectively at runtime. Classes, methods, fields, and packages can be annotated.

Hypothetical Example:

Let’s assume that your company is maintaining a large enterprise application for a client. The application was delivered a year ago and is currently in the maintenance phase. The usual maintenance activities are bug fixing and backups.

The original developers have been rotated out, and the majority of the maintenance team is relatively new, having only a rudimentary knowledge of the application.

Now, after a year or so, the client plans the next version of the application, with a set of new features based on market feedback, user input etc. The client comes up with about 80 – 90 new requirements and requests a proposal to accommodate these new features in version 2 of the application.

The version 1 requirements were in a separate, outdated Word document. The requirements for bug fixes and minor enhancements that had come later were scattered across the team’s mailboxes and the bug tracking tool. The requirements traceability matrix was in a very poor state and badly outdated. The team lacked the rigor to update it as needed, always postponing it. The end result: the RTM document became so outdated that even a willing developer lacked the motivation to fix it.

The Project Leader (who is again new, for whatever reasons) has to respond to the client with a proposal for this new set of requirements. He and his team are stuck and have no clue where to start or how to find which requirement impacts which part of the code.

The above is a little dramatized example, but I have seen such cases.

Although the team consists of sharp developers, they do not show the same rigor in updating the RTM document as the passion they show for coding.

How do the annotations help?

It is a developer-friendly approach: the requirements are maintained as “metadata” in the source code itself, and the code review process can enforce this.

Tracking requirements using annotations directly in the code helps in several ways:

1. Requirements are always in the current state

2. No need for a separate RTM document

3. Reports on the requirements can be generated on the current source code

4. Impact analysis / estimation of new features and tasks would be easier

5. No need to invest in, manage, or train people on an additional commercial tool

6. One place maintenance

Moving on

Firstly, let us define an annotation, which will be used by end users i.e. developers.
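A minimal sketch of such an annotation, under stated assumptions: only projectName and type (both with default values) are named in this article, so the other property names (id, description, useCase, module, priority), the two enums, and the default project name "TestReq" are hypothetical choices made for illustration. In the article's layout the type would be public and live in its own file in the package com.req.annot.

```java
import java.lang.annotation.Documented;
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;

// Hypothetical shape of the Requirement annotation (package com.req.annot in the article).
@Documented
@Retention(RetentionPolicy.RUNTIME)              // keep it in class files and visible to reflection
@Target({ElementType.TYPE, ElementType.METHOD})  // allowed on classes/interfaces and methods
@interface Requirement {

    // Assumed property: identifier of the requirement, e.g. "REQ-001".
    String id();

    // Assumed property: short free-text description.
    String description() default "";

    // Assumed property: use case this requirement belongs to.
    String useCase() default "";

    // Named in the article; the default value here is an assumption.
    String projectName() default "TestReq";

    // Assumed property: module this requirement belongs to.
    String module() default "";

    // Assumed property: how important the requirement is.
    Priority priority() default Priority.MEDIUM;

    // Named in the article; modelling it as an enum is an assumption.
    Type type() default Type.FUNCTIONAL;

    enum Priority { LOW, MEDIUM, HIGH }

    enum Type { FUNCTIONAL, NON_FUNCTIONAL }
}
```

Properties with a default can be left out where the annotation is applied; only id is mandatory in this sketch.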

Annotations are very similar to Java interface definitions. Notice the @ symbol before the interface keyword. In the above code snippet, we have defined an annotation named Requirement (it could equally be named Feature, in agile lingo). Most of the properties defined above are self-explanatory. Basically, they track which use case, project, and module the requirement belongs to, whether it is high priority, whether it is a functional requirement etc.

Example in use

Let us define a simple Java interface & apply the Requirement annotation.
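A sketch of how that could look. The interface name and the two method names with their (String, Date) parameter types come from this article; the parameter names, the requirement ids, and the use case name are made up for illustration. A trimmed, hypothetical copy of the annotation is repeated so that the snippet compiles on its own.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.util.Date;

// Trimmed, hypothetical copy of the Requirement annotation so this compiles alone.
@Retention(RetentionPolicy.RUNTIME)
@Target({ElementType.TYPE, ElementType.METHOD})
@interface Requirement {
    String id();
    String useCase() default "";
    String projectName() default "TestReq"; // default value is an assumption
    Type type() default Type.FUNCTIONAL;

    enum Type { FUNCTIONAL, NON_FUNCTIONAL }
}

// projectName and type are not given below, so their defaults apply.
@Requirement(id = "REQ-001", useCase = "UC-WorkCalendar")
interface WorkDaysCalendarCalc {

    // Parameter names are illustrative; the article only shows the types.
    @Requirement(id = "REQ-001.1", useCase = "UC-WorkCalendar")
    boolean isWorkDay(String calendarCode, Date date);

    @Requirement(id = "REQ-001.2", useCase = "UC-WorkCalendar")
    int getWorkDayNumberInCurrentYear(String calendarCode, Date date);
}
```

Because the annotation is retained at runtime in this sketch, WorkDaysCalendarCalc.class.getAnnotation(Requirement.class) would also return it via reflection.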

The above code snippet is pretty much self-explanatory; it shows how the code looks when the Requirement annotation is applied to a Java interface, WorkDaysCalendarCalc, with two service methods: isWorkDay() & getWorkDayNumberInCurrentYear(). Also notice that some properties of the Requirement annotation, like projectName and type, have default values. These need not be specified when the annotation is applied.

Annotation Processing

The next step is to process these annotations. In Java SE 5.0, annotations are processed not with the usual java command, but with a tool called apt (annotation processing tool).

Java SE 6 has this capability built into the compiler, thanks to JSR-269.

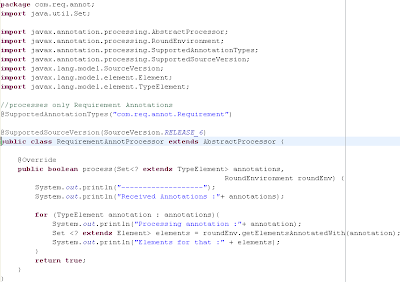

Annotation processors in Java 6.0 typically extend AbstractProcessor from the javax.annotation.processing package and override the process method.
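A minimal sketch of such a processor, assuming it only prints what it receives, in the spirit of the output shown later in this article. The class name and the supported annotation name come from the article; the body is my reconstruction, not the original listing. The class is package-private here only to keep the snippet single-file: javac instantiates processors through a public no-argument constructor, so for real use it has to be declared public in RequirementAnnotProcessor.java (package com.req.annot).

```java
import java.util.Set;
import javax.annotation.processing.AbstractProcessor;
import javax.annotation.processing.RoundEnvironment;
import javax.annotation.processing.SupportedAnnotationTypes;
import javax.lang.model.SourceVersion;
import javax.lang.model.element.TypeElement;

// Only invoked for sources that use com.req.annot.Requirement.
@SupportedAnnotationTypes("com.req.annot.Requirement")
class RequirementAnnotProcessor extends AbstractProcessor {

    // Accept whatever source level the running compiler supports.
    @Override
    public SourceVersion getSupportedSourceVersion() {
        return SourceVersion.latestSupported();
    }

    @Override
    public boolean process(Set<? extends TypeElement> annotations, RoundEnvironment roundEnv) {
        if (annotations.isEmpty()) {
            return false; // nothing to do in this round
        }
        System.out.println("Received Annotations :" + annotations);
        for (TypeElement annotation : annotations) {
            System.out.println("Processing annotation :" + annotation.getQualifiedName());
            // Classes, interfaces and methods that carry the annotation.
            System.out.println("Elements for that :" + roundEnv.getElementsAnnotatedWith(annotation));
        }
        return true; // claim the annotation so no other processor handles it
    }
}
```

Returning true tells the compiler that this processor has claimed the annotation; a reporting-only processor could just as well return false.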

The above RequirementAnnotProcessor processes only those Java files which use the Requirement annotation. This restriction is imposed by @SupportedAnnotationTypes("com.req.annot.Requirement").

The javac compiler calls the process method of our RequirementAnnotProcessor whenever it encounters a Java file which uses the com.req.annot.Requirement annotation.

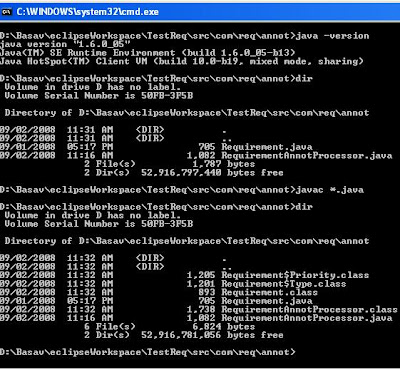

The first step would be to compile our RequirementAnnotProcessor.java.

The below figure should give an idea on the package structure.

Open a command prompt, and navigate to the package com.req.annot and compile RequirementAnnotProcessor.java. Please see the below image for more details.

Output

The next step after compiling the Annotation processor would be to process the Java file, WorkDaysCalendarCalc.java, which uses our Requirement annotation.

Navigate to the src folder and issue the following command:

D:\Basav\eclipseWorkspace\TestReq\src>javac -processor com.req.annot.RequirementAnnotProcessor com\test\service\WorkDaysCalendarCalc.java

Here the -processor switch tells javac which annotation processor to run before compiling the code.

You should see the following output:

-------------------

Received Annotations :[com.req.annot.Requirement]

Processing annotation :com.req.annot.Requirement

Elements for that :[com.test.service.WorkDaysCalendarCalc, isWorkDay(java.lang.String,java.util.Date), getWorkDayNumberInCurrentYear(java.lang.String,java.util.Date)]

-------------------

The process method of RequirementAnnotProcessor is called for each Java file containing the Requirement annotation, in our case WorkDaysCalendarCalc, and prints the elements (classes, methods etc) which use the Requirement annotation.

The process method is the worker bee of any annotation processor. In the above rudimentary example, it just prints all the elements which declare our Requirement annotation. In practice this method would be enhanced to generate an XML file, e.g. something like the below structure. This is just an indication; the output report can be customized based on the project needs.

Conclusion

To sum up, this is an attempt to explore the possibility of tracking requirements, and keeping them as up-to-date as possible, using annotations. Over the past couple of years, agile fever has been catching on. Lots of Java projects are heading the agile way, with shorter iterations, less up-front design, and diving directly into the code with a small set of initial requirements, taking baby steps. Annotating requirements would definitely add a step for the developers, but the returns would be huge.

Thanks for reading.

References

http://java.sun.com/docs/books/tutorial/java/javaOO/annotations.html

http://java.sun.com/j2se/1.5.0/docs/guide/language/annotations.html

http://www.jcp.org/en/jsr/detail?id=175

http://www.jcp.org/en/jsr/detail?id=269

May 23, 2005

Approach to developing Resource Adapters using J2CA

The section below is part of my write-up on JCA, given to my boss. The first part is an overview of JCA, which I did not think was important/crucial enough to post here. You can find a lot of primers on J2CA. The seeker seeks it.

The second part of the write-up is basically the title of this blog post, which is based on my limited experience and knowledge.

Here it goes...

Approach to developing Resource Adapters using J2CA

JCA is an interface definition. There are no specific guidelines or approaches/reference implementations provided by SUN in creating resource adapters.

The approaches broadly fall under two categories:

- Non-Framework based approach

- Framework based approach

Before we decide on one of the above approaches, it is recommended to build a technology proof of concept (POC) implementing a basic adapter to connect to each type of EIS, to mitigate technical risks as early as possible. This is important as we are integrating J2EE systems with disparate legacy/EIS systems. The technology POC singles out and eliminates the main elements of risk. The goal can be to implement a single requirement, be it connection related or transaction related, using the technology that we would like to prove.

The following outline of the development approach for building resource adapters applies:

- Research EIS requirements

Identify the required EIS and appropriate services that need to be exposed

Identify the expensive Connection object

Implement a POC

Identify security needs

Identify type of transaction (XA-Txn, Local Txn or No Txn)

- Development environment configuration

Set up file/directory structure

Set up build process

- Implement Service provider interface

Implement the interfaces that comprise the SPI, at least the following three interfaces:

ManagedConnectionFactory, which supports connection pooling by providing methods for matching and creating a ManagedConnection instance.

ManagedConnection, which represents a physical connection to the underlying EIS

ManagedConnectionMetaData, which provides information about the underlying EIS instance associated with a ManagedConnection instance

- Implement Client Connection Interface (optional)

Implement the interfaces that comprise the CCI including the Connection interface and the Interaction interface

- Package the Adapter

Package the adapter into rar file.

Deploy the Adapter on the target application server platform

- Test Adapter

Test the adapter for functionality & performance on desired platforms/EIS versions

- Release the adapter

Non-Framework based approach

This approach is primarily useful if we are implementing only one type of resource adapter. Here everything needs to be implemented from scratch, i.e. all the steps outlined above are executed. If an adapter factory is envisaged, the framework based approach is recommended.

Framework based approach

When there is a need to create resource adapters for more than one EIS, development effort can be greatly reduced by defining a generic adapter framework. This is because the common features applicable for all adapters can be built into the framework.

The framework can also implement some default behavior which can be extended as needed by the developer. For example, the JCA specification requires that the InteractionSpec implementation class provide getter and setter methods that follow the JavaBeans design pattern. To support the JavaBeans design pattern, support for PropertyChangeListeners and VetoableChangeListeners is required in the implementation class. The framework can take care of this and other low-level details, allowing the adapter developer to focus on implementing the EIS-specific details of the adapter.
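To make the listener plumbing concrete, here is a minimal sketch of a framework base class built on java.beans. The class names AbstractInteractionSpec and SampleInteractionSpec and the functionName property are hypothetical, and a real base class would also implement javax.resource.cci.InteractionSpec, which is left out here because that API is not part of the JDK.

```java
import java.beans.PropertyChangeListener;
import java.beans.PropertyChangeSupport;
import java.beans.PropertyVetoException;
import java.beans.VetoableChangeListener;
import java.beans.VetoableChangeSupport;

// Hypothetical framework base class: owns the listener bookkeeping once,
// so each adapter's InteractionSpec only declares its own properties.
abstract class AbstractInteractionSpec {
    private final PropertyChangeSupport changes = new PropertyChangeSupport(this);
    private final VetoableChangeSupport vetoes = new VetoableChangeSupport(this);

    public void addPropertyChangeListener(PropertyChangeListener l) { changes.addPropertyChangeListener(l); }
    public void removePropertyChangeListener(PropertyChangeListener l) { changes.removePropertyChangeListener(l); }
    public void addVetoableChangeListener(VetoableChangeListener l) { vetoes.addVetoableChangeListener(l); }
    public void removeVetoableChangeListener(VetoableChangeListener l) { vetoes.removeVetoableChangeListener(l); }

    // Lets listeners reject a change before it is applied.
    protected void fireVetoableChange(String property, Object oldValue, Object newValue)
            throws PropertyVetoException {
        vetoes.fireVetoableChange(property, oldValue, newValue);
    }

    // Tells listeners about a change that has already been applied.
    protected void firePropertyChange(String property, Object oldValue, Object newValue) {
        changes.firePropertyChange(property, oldValue, newValue);
    }
}

// Hypothetical EIS-specific spec: only the property itself is written by the adapter developer.
class SampleInteractionSpec extends AbstractInteractionSpec {
    private String functionName;

    public String getFunctionName() { return functionName; }

    public void setFunctionName(String newName) throws PropertyVetoException {
        String oldName = functionName;
        fireVetoableChange("functionName", oldName, newName); // may throw, leaving the old value
        functionName = newName;
        firePropertyChange("functionName", oldName, newName);
    }
}
```

The setter asks the vetoable listeners first and assigns only if nobody objects, which is the usual order for a constrained JavaBeans property.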

The common features for the resource adapters that can be implemented by the framework include but are not limited to

- Basic support for internationalization and localization of exception and log messages for an adapter

- Logging toolkit support that allows you to log localized messages to multiple output destinations

- Getter and setter methods for standard connection properties (username, password, server, connectionURL, and port), as the connection properties are common to all the EIS

- License checking facility (applicable for EIS vendors who market the adapters)

- Default Connection event listeners for logging connection-related events

- Simplifying the clean-up and destruction of Connections: destroying Connection instances when a connection-related error occurs

- Exception handling: providing generic exception handling

- Base implementation of the primary interfaces: abstract implementations of the interfaces

Instead of investing a lot of effort and time in planning/designing a custom framework from scratch, there are many frameworks available from popular application server vendors which can be used right away. For e.g. BEA has Adapter Development Toolkit, IBM has its own Connector framework; SUN has Sun ONE Connector Builder etc. These are best used with their respective application servers, as they are designed for those, and porting to other application servers can be difficult. But a lot can definitely be gained by studying these frameworks’ documentation, which can aid in building your own custom framework.

In conclusion, the approach depends on factors such as business drivers, time to market, complexity, and the available developer skill set. Having a framework in place adds structure and consistency to the process and product, reducing recurring development/enhancement and maintenance costs.

May 16, 2005

Classic Mistakes of Testing

Here it goes...

A first major mistake people make is thinking that the testing team is responsible for assuring quality. This role, often assigned to the first testing team in an organization, makes it the last defense, the barrier between the development team (accused of producing bad quality) and the customer (who must be protected from them).

It's characterized by a testing team (often called the "Quality Assurance Group") that has formal authority to prevent shipment of the product. That in itself is a disheartening task: the testing team can't improve quality, only enforce a minimal level.

Worse, that authority is usually more apparent than real. Discovering that, together with the perverse incentives of telling developers that quality is someone else's job, leads to testing teams and testers who are disillusioned, cynical, and view themselves as victims.

We've learned from Deming and others that products are better and cheaper to produce when everyone, at every stage in development, is responsible for the quality of their work ([Deming86], [Ishikawa85]).

In practice, whatever the formal role, most organizations believe that the purpose of testing is to find bugs. This is a less pernicious definition than the previous one, but it's missing a key word. When I talk to programmers and development managers about testers, one key sentence keeps coming up: "Testers aren't finding the important bugs." Sometimes that's just griping, sometimes it's because the programmers have a skewed sense of what's important, but I regret to say that all too often it's valid criticism. Too many bug reports from testers are minor or irrelevant, and too many important bugs are missed.

Now for the complete article on classic mistakes, click here.

May 10, 2005

Hibernate Vs EJB 2.1(Entity Beans)

I was planning to write on System Design worries, but my boss urgently asked me to write something about EJB and Hibernate and their differences, based on my experience and some googling. It was urgently required for a proposal.

I finished this 2-pager and thought I would share it with you all. It might be of some help.

Hibernate 3.0 & EJB 2.1 (CMP)

Hibernate is a powerful, ultra-high performance Object/Relational (OR) persistence and query service for Java.

Hibernate

- integrates elegantly with all popular J2EE application servers and web containers without any restrictions.

- can also be used in stand alone Java applications.

- supports and implements the EJB 3.0 (JSR 220) persistence standardization.

This comparison between Hibernate and EJB 2.1 is strictly with CMP Entity beans and not with the EJB platform per se. The EJB platform (Session Beans/MDBs) offers many advantages such as componentization, remote access to applications, support for a variety of clients (Java/CORBA etc) and an asynchronous message model.

The EJB persistence mechanism (CMP Entity beans) has many issues/disadvantages

- they are heavy weight components

- high runtime overhead

- have a poor track record on the performance

- cumbersome to develop, lot of interface definitions limiting developer productivity

- Bean partitioning (Each bean a row in some table/Not every row of every table a Bean)

- Vendor dependent CMP optimizations can help performance, at cost of portability

- Inheritance not supported

- Cannot be used for persistence in non-application server environments.

- There is no dynamic query mechanism to lookup entity beans (finders are specified at compile time).

- It is not easy to write unit tests for beans as it is not possible to use them outside of the application server.

- No support for automatic primary key generation.

- Only relational databases are supported

Hibernate Features/Advantages

Hibernate is an open source product (similar to Struts and Log4j), is not vulnerable to vendor lock-in, and is supported by JBoss Inc.

- Hibernate works on POJO principles and it is lightweight

- Hibernate is much easier to use than handwritten SQL/JDBC (i.e. BMP Beans), and much, much easier to use and more powerful than Entity Beans 2.1

- Hibernate always executes SQL statements using a JDBC PreparedStatement, which allows the database to cache the query plan.

- Hibernate is able to implement certain optimizations (caching, outer join association fetching, JDBC batching, etc.) much more efficiently than typical handwritten JDBC.

- You may use Hibernate from servlets or Struts actions, or from behind an EJB session bean facade. In a CMT environment, Hibernate integrates with the JTA Datasource and TransactionManager, as well as JNDI.

- Hibernate has a very sophisticated second-level cache architecture and supports pluggable cache implementations.

- Hibernate supports composite keys

- Hibernate supports persistence of instance variables as well as JavaBeans-style properties (getters/setters)
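To illustrate the POJO point, here is a sketch of the kind of class Hibernate can persist. The Customer class and its fields are hypothetical; the mapping that ties it to a table (for example an hbm.xml file) is not shown, and nothing in the class depends on Hibernate or an application server.

```java
// Hypothetical persistent class: a plain Java object with no framework
// interfaces to implement and no container required to instantiate it.
class Customer {
    private Long id;      // would be mapped to the primary key column
    private String name;  // would be mapped to an ordinary column

    // A no-argument constructor lets the persistence layer create instances.
    public Customer() {
    }

    public Customer(String name) {
        this.name = name;
    }

    public Long getId() { return id; }

    public void setId(Long id) { this.id = id; }

    public String getName() { return name; }

    public void setName(String name) { this.name = name; }
}
```

Because it is an ordinary class, it can be created and unit tested with a plain new Customer("Acme"), which is exactly what CMP entity beans make hard.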

Useful links:

May 5, 2005

J2EE Architect Worries-Contd..

2. System Design:

- Scoping Requirements

- System Interfaces

- Reviews of UML Artifacts

- Functional Documents

- Data Modeling

3. Architectural Artifacts:

- Functional/Logical System Model

- Physical System Model

- Component/Packaging Model

- Third Party Service Providers and Component libraries

- Application Framework

- Data Management Strategy

- System Transactional requirements

- Application Integration & External Systems

- Security Requirements

- Performance Requirements

- Internationalization requirements

- System Transition Strategy

4. Environments:

- Development Environment

- Integration testing environment

- User Acceptance testing environment

- Production Environment

5. Development (my favourite one):

1. Application Framework

2. Coding Standard

3. Use Case Realizations with UML artifacts and functional documents

4. Software Configuration Management

5. Development environment

6. Daily development activities

7. Unit Testing Framework

8. Trainings & Sessions

more later..first I need to elaborate on each of the above one ....

May 4, 2005

What a J2EE Architect needs to worry about?

-------------------------------------------------------------------------------------

The best architects are good technologists and command respect in the technical community, but also are good strategists, organizational politicians (in the best sense of the word), consultants and leaders.

(I have taken this from here. This is a good site on Software Architecture Discipline)

Here is the list:

1. Methodology

2. System Design

3. Architectural Artifacts

4. Environments

5. Development

6. Build and Deployment

7. Testing

8. Tools

9. Miscellaneous Deliverables

Let's take them one by one.

1. Methodology

a. Rational Unified Process (RUP)

b. Agile Methodologies

c. Xtreme Programming (XP)

d. Crystal

e. Feature Driven Development

f. Hybrid of RUP and XP

a. Rational Unified Process (RUP)

The Rational Unified Process collects many of the best practices of OO analysis and design to form a process framework with 38 different artifacts. RUP is not generally considered lightweight, although a lightweight configuration called dx (“xp” turned upside down) exists. Of course, not all 38 artifacts are required in either RUP or dx. In fact, the process framework is configurable to as few as two (use cases and code) artifacts. However, the general RUP-based process uses quite a few requirements, analysis, and design artifacts because its developers based this process on the activities of the OOA/D movement.

b. Agile Methodologies

c. Xtreme Programming (XP)

Extreme Programming has been the pioneer in the modern movement toward lightweight processes. XP emphasizes a single major artifact, the code itself. This process uses 3” x 5” cards to capture requirements in user stories and design via CRC (class, responsibilities, and collaboration) cards, the minor artifacts of the process. XP is much more than user stories, CRC cards, and coding, however. Testing frameworks and innovative practices such as pair programming (working in groups of two people) make XP an interesting addition to the field of software development processes

d. Crystal

Crystal is a lightweight process that contains 20 artifacts. This might sound like a heavier process than XP but most of the artifacts are informal and can take the form of “chalk talks” (working problems out on a chalk board), conversations, and e-mails. Of these 20 artifacts, only the final system, the test cases, and the documentation are formal. Crystal divides its artifacts into levels of precision (20,000-foot view, 5,000-foot view, 10-foot view) to allow developers to focus on their objectives.

e. Feature Driven Development

Feature-Driven Development is an incremental approach that uses as few as four artifacts (feature list, class diagram, sequence charts, and code). The FDD process focuses development using two-week iterations to show quick tangible results. Among the contributions this process provides is a semantic-based class diagram template—called the domain neutral component, which differentiates types of classes by color—to aid class designers in developing a domain model.

f. Hybrid of RUP and XP

Secure Sockets Layer

Introduction

HTTPS is HTTP running over Secure Sockets Layer (SSL).

SSL (now up to version 3.0) is a standard protocol proposed by Netscape for implementing cryptography and enabling secure transmission on the Web.

The primary goal of the SSL protocol is to

- provide privacy and reliability between two communicating parties.

The two security aims of SSL are

- To authenticate the server and the client using public key signatures and digital certificates.

- To provide an encrypted connection for the client and server to exchange messages securely

SSL runs on top of the transport layer (TCP) and underneath application protocols such as HTTP.

SSL uses

- certificates,

- private/public key exchange pairs and

- Diffie-Hellman key agreements

In SSL,

- Symmetric cryptography is used for data encryption

- Asymmetric or public key cryptography is used to authenticate the identities of the communicating parties and encrypt the shared encryption key when an SSL session is established.

SSL is comprised of three protocols:

- record protocol

- handshake protocol

- alert protocol

The record protocol defines the way that messages passed between the client and servers are encapsulated. At any point in time it has a set of parameters associated with it, known as a cipher suite, which defines the cryptographic methods being used.
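For a feel of what cipher suite names look like, the standard JSSE API can list the suites a Java runtime enables by default. This is a small sketch; the class name CipherSuiteLister is made up, and a present-day JDK will print TLS suites rather than the SSL 3.0-era ones discussed in this post.

```java
import java.security.NoSuchAlgorithmException;
import javax.net.ssl.SSLContext;
import javax.net.ssl.SSLParameters;

// Hypothetical helper that asks the default SSLContext which cipher suites are enabled.
class CipherSuiteLister {

    static String[] enabledCipherSuites() {
        try {
            SSLParameters params = SSLContext.getDefault().getDefaultSSLParameters();
            // Each name bundles key exchange, bulk cipher and MAC algorithms.
            return params.getCipherSuites();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("No default SSLContext available", e);
        }
    }

    static void printEnabledCipherSuites() {
        for (String suite : enabledCipherSuites()) {
            System.out.println(suite);
        }
    }
}
```

Calling CipherSuiteLister.printEnabledCipherSuites() prints one suite name per line; the exact list depends on the JDK version and its security configuration.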

The handshake protocol runs on top of the SSL Record protocol. It defines a series of messages in which the client and server negotiate the type of connection that they can support, perform authentication, and generate a bulk encryption key. During a typical SSL session, the server and client exchange several Handshake protocol messages during the transaction. Depending on the chosen encryption type, a server using the SSL protocol uses public-key encryption technologies to authenticate itself to the client.

The alert protocol also runs over the SSL Record protocol. It signals problems with the SSL session, ranging from simple warnings (e.g., unknown certificate, revoked certificate, expired certificate) to fatal error messages that immediately terminate the SSL connection. For example, you might receive the "You are about to leave a secure Internet connection" warning because an SSL client received a close_notify alert from an SSL server.

Operation of SSL

The client initiates the connection by requesting an https:// URL, which sets up the SSL tunnel before any HTTP data is exchanged.

By default, HTTPS uses port 443; servers can be configured to listen for SSL on other ports.

The algorithms SSL uses include

- RC4-128,

- Diffie-Hellman 1024,

- MD5 and

- Null.

The encryption is carried out at the socket layer, i.e. on top of layer 4 (TCP).

The major elements in an SSL connection are:

1) The cipher suites that are enabled

2) The compression methods that can be used (the compression algorithms are used to compress the SSL data and should be lossless)

3) Digital certificates and private keys, used for authentication and verification

4) Trusted signers (the repository of trusted signer certificates, used to verify the other entities’ certificates)

5) Trusted sites (the repository of trusted site certificates)

SSL Handshake

The steps involved in an SSL transaction before the communication of data begins are described in the following list:

1) The client sends the server a Client Hello message. This contains a request for a connection along with the client capabilities, like the version of SSL, the cipher suites and the data compression methods it supports.

2) The server responds with a Server Hello message. This includes the cipher suite and the compression method it has chosen for the connection and the session ID for the connection. Normally, the server chooses the strongest common cipher suite. If the server is unable to find a cipher suite that both the client and server support, it sends a handshake failure message and closes the connection.

3) The server sends its certificate if it is to be authenticated, and the client verifies it. Optionally the client sends its certificate and the server verifies it.

4) The client sends the ClientKeyExchange message. This is random key material, and it is encrypted with the server’s public key. This material is used to create the symmetric key to be used for this session, and the fact that it is encrypted with the server’s public key is to allow a secure transmission across the network. The server must verify that the same key is not already in use with any other client. If this is the case, the server asks the client for another random key.

5) When client and server agree on a common symmetric key for encrypting the communication, the client sends a ChangeCipherSpec message indicating the confirmation that it is ready to communicate. This message is followed by a Finished message.

6) In response, the server sends its own ChangeCipherSpec message indicating the confirmation that it is ready to communicate. This message is followed by a Finished message.

7) Client and Server exchange the encrypted data.
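The cipher suite choice in step 2 can be sketched in a few lines. This is a simplified model, not how any particular server is implemented: it assumes the server keeps its suites in a preference list ordered strongest first and picks the first one the client also offered. The class name CipherNegotiation is made up for illustration.

```java
import java.util.List;
import java.util.Optional;

// Simplified model of the Server Hello decision from step 2.
class CipherNegotiation {

    // serverPreference is assumed to be ordered strongest first.
    // Returns the first server-preferred suite that the client also offered,
    // or empty when there is no common suite (handshake failure).
    static Optional<String> choose(List<String> serverPreference, List<String> clientOffered) {
        for (String suite : serverPreference) {
            if (clientOffered.contains(suite)) {
                return Optional.of(suite);
            }
        }
        return Optional.empty();
    }
}
```

An empty result corresponds to the case where the server sends a handshake failure message and closes the connection.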

The problems associated with SSL are:

- It prevents caching.

- Using SSL imposes greater overheads on the server and the client.

- Some firewalls and/or web proxies may not allow SSL traffic.

- There is a financial cost associated with gaining a Certificate for the server/subject device.